Why Dynamic array sizes are doubled in capacity?

The Geometric Logic of O(1) Amortized Time

In systems programming, the dynamic array (e.g., std::vector, ArrayList, or Python's list) is a fundamental tool. But have you ever paused to ask why these containers double their capacity when they run out of space?

It might feel like "wasting" memory, but the math reveals that doubling is the brilliant way to keep our most common operation append() performing at best speed.

The Performance Killer : Linear Growth (\(+K\))

Suppose we decide to be "memory efficient" and grow our array by a fixed amount—say, 100 slots—only when we absolutely need to.

If we want to insert N elements, how many times do we have to copy data to a new memory location?

Initial State: You allocate a block of 100. You fill it up (Elements 1 to 100). Copies = 0.

The 101st Element: You have no room. You must allocate a new block of 200. You copy the 100 existing elements over.

The 201st Element: You are full again. You allocate a block of 300. You copy the 200 existing elements over.

The 301st Element: You allocate 400. You copy the 300 existing elements over.

The total work (W) is an arithmetic progression:

$$W = 100 + 200 + 300 + ... + (N - 100)$$

Factoring out the 100,

$$W = 100 \times (1 + 2 + 3 + \dots + \frac{N}{100} - 1)$$

Applying the Arithmetic Series Formula,

The sum of 1 to M is, The quadratic formula is \(\frac{M(M+1)}{2}\). Here, \(M \approx \frac{N}{100}\)

$$W \approx 100 \times \frac{(\frac{N}{100})^2}{2}$$

Using the sum of an arithmetic series, this results in:

$$W \approx 100 \times \frac{N^2}{100^2 \times 2} = 100 \times \frac{N^2}{10000 \times 2} = \frac{N^2}{100 \times 2} = \mathbf{\frac{N^2}{200}}$$

In Big-O terms, this is \(O(N^2)\) total work. If you divide that by N insertions, each individual append() costs $O(N)$. As your dataset grows, your application will get exponentially slower.

The Efficiency King: Geometric Growth(\(\times 2\))

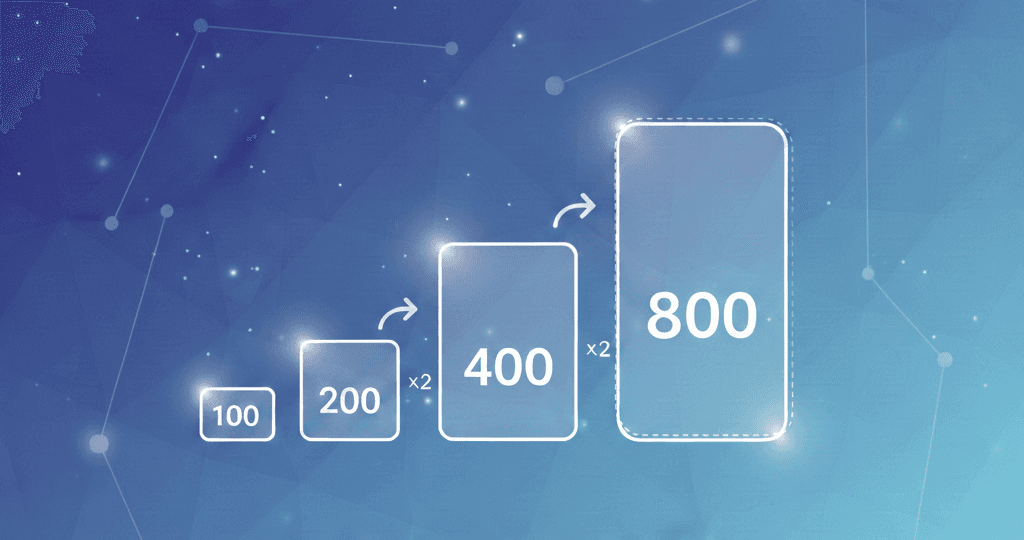

Now let's look at the same $N$ where we start with 100 slots, using the Doubling method:

Initial State: Allocate 100. Fill them. Copies = 0.

The 101st Element: Double to 200. Copy 100.

The 201st Element: Double to 400. Copy 200.

The 401st Element: Double to 800. Copy 400.

The 801st Element: Double to 1600. Copy 800.

For N elements (where N is a power of 2), our copy operations look like this:

$$W = 100 \times (1 + 2 + 4 + 8 + \dots + \frac{N}{2}) = 100 \times (2^0 + 2^1 + 2^2 + 2^3 + \dots + 2^{k-1})$$

This is a geometric series where base is 2. A key property of this series is that the sum of all previous terms is always less than the next term. Specifically:

$$W \approx 100 \times \sum_{i=0}^{x} 2^i = 2^{x+1} - 1 \approx 100 \times \sum_{i=0}^{k-1} 2^i = 2^{k-1+1} - 1\approx 100 \times \sum_{i=0}^{k-1} 2^i = 2^{k} - 1$$

$$ W \approx 2^k \approx N-100$$

The Big Reveal: Amortized ($O(1)$)

To find the average cost of a single append, we take the total work and divide it by the number of elements:

$$\frac{Total\ Work}{Total\ Elements} = \frac{N - 1}{N} \approx 1$$

Even though some appends are "expensive" (the ones that trigger a resize), the vast majority are "cheap." On average, every append costs a constant amount of time, or as we call it in software world, $O(1)$

Memory vs. Speed: The Growth Factor (\(\alpha\))

While doubling \(\alpha = 2\) is the textbook standard, the specific multiplier can vary:

Factor of 2.0: Extremely fast, but because \(1 + 2 + 4 < 8\), the memory allocator can never "reuse" the old chunks of memory you just left behind. They are always too small for the next jump.

Factor of 1.5: Used by many modern libraries (like

libc++orFBVector). Because $1.5$ is smaller, it allows the allocator to eventually "recycle" the memory from previous steps, improving cache locality and reducing fragmentation.

Strategy | Total Copies (N) | Avg. Append Cost | Memory Overhead |

|---|---|---|---|

Linear (+100) | \approx N ^2 | O(N) ~ Slow | Low |

Geometric ( \times 2 ) | \approx N | O(1) ~ Fast | Up to 50% |

Final Thought

We don't double the array because we are greedy for RAM; we double it because it turns a quadratic performance disaster into a linear, predictable success. It is one of the most successful "space-time tradeoffs" in computer science.